I agree that the PLS platform might be better suited to what you want to do, or the SVM platform. You might also consider adding a null factor column (see the autovalidation add-in for JMP) to check whether each of your candidate predictors actually shows up in the model more often than a completely random, orthogonal null factor.
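To make the null factor idea concrete outside of JMP, here is a rough Python sketch. It is not the add-in's algorithm: as a simplified stand-in, it bootstraps the rows, ranks each column by absolute correlation with the outcome, and counts how often each column comes out on top. The data, the column names (`signal`, `noise`, `null_factor`), and the `selection_frequency` helper are all made up for illustration, and the null column here is only random, not strictly orthogonal.

```python
import random


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either input has no variance."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def selection_frequency(columns, outcome, n_resamples=200, top_k=1, seed=0):
    """Fraction of bootstrap resamples in which each column ranks in the top_k
    by absolute correlation with the outcome (a simplified selection rule)."""
    rng = random.Random(seed)
    n_rows = len(outcome)
    counts = {name: 0 for name in columns}
    for _ in range(n_resamples):
        rows = [rng.randrange(n_rows) for _ in range(n_rows)]
        y = [outcome[i] for i in rows]
        scores = {
            name: abs(pearson([values[i] for i in rows], y))
            for name, values in columns.items()
        }
        for name in sorted(scores, key=scores.get, reverse=True)[:top_k]:
            counts[name] += 1
    return {name: count / n_resamples for name, count in counts.items()}


# Synthetic example: one real predictor, one useless predictor, one null factor.
data_rng = random.Random(1)
n_rows = 200
signal = [data_rng.gauss(0, 1) for _ in range(n_rows)]
noise = [data_rng.gauss(0, 1) for _ in range(n_rows)]
null_factor = [data_rng.gauss(0, 1) for _ in range(n_rows)]  # pure random column
outcome = [1 if s + data_rng.gauss(0, 0.5) > 0 else 0 for s in signal]

columns = {"signal": signal, "noise": noise, "null_factor": null_factor}
frequencies = selection_frequency(columns, outcome)
for name, freq in frequencies.items():
    print(f"{name}: selected in {freq:.0%} of resamples")
```

A predictor that is selected about as often as `null_factor` (or less) is probably not contributing anything real. In practice you would swap the correlation ranking for whatever model selection you are actually using.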

If you don't have Pro, you'll probably want to try either the partition (decision tree) method or the neural net.

Another thing: depending on how many rows you have, you might want to generate a validation column stratified on the outcome column and use it to validate your model. This might be much better suited to the SVM platform.
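If it helps to see what "stratified on the outcome" means, here is a minimal Python sketch of the idea (in JMP you would use the built-in validation column utility instead). The `make_validation_column` helper, the 25% holdout, and the example outcome counts are assumptions for illustration only.

```python
import random
from collections import defaultdict


def make_validation_column(outcomes, validation_fraction=0.25, seed=0):
    """Label each row 'Training' or 'Validation', holding out roughly the same
    fraction of rows within every outcome class."""
    rng = random.Random(seed)
    rows_by_class = defaultdict(list)
    for row_index, outcome in enumerate(outcomes):
        rows_by_class[outcome].append(row_index)

    labels = ["Training"] * len(outcomes)
    for row_indices in rows_by_class.values():
        rng.shuffle(row_indices)
        n_validation = round(len(row_indices) * validation_fraction)
        for row_index in row_indices[:n_validation]:
            labels[row_index] = "Validation"
    return labels


# Hypothetical imbalanced outcome: 20 positives, 80 negatives.
outcomes = [1] * 20 + [0] * 80
validation_column = make_validation_column(outcomes)

for outcome_class in (1, 0):
    held_out = sum(
        1
        for outcome, label in zip(outcomes, validation_column)
        if outcome == outcome_class and label == "Validation"
    )
    print(f"class {outcome_class}: {held_out} validation rows")
```

The point of stratifying is that a rare class keeps the same share in both sets, so the validation set can't end up with almost no positives by chance.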

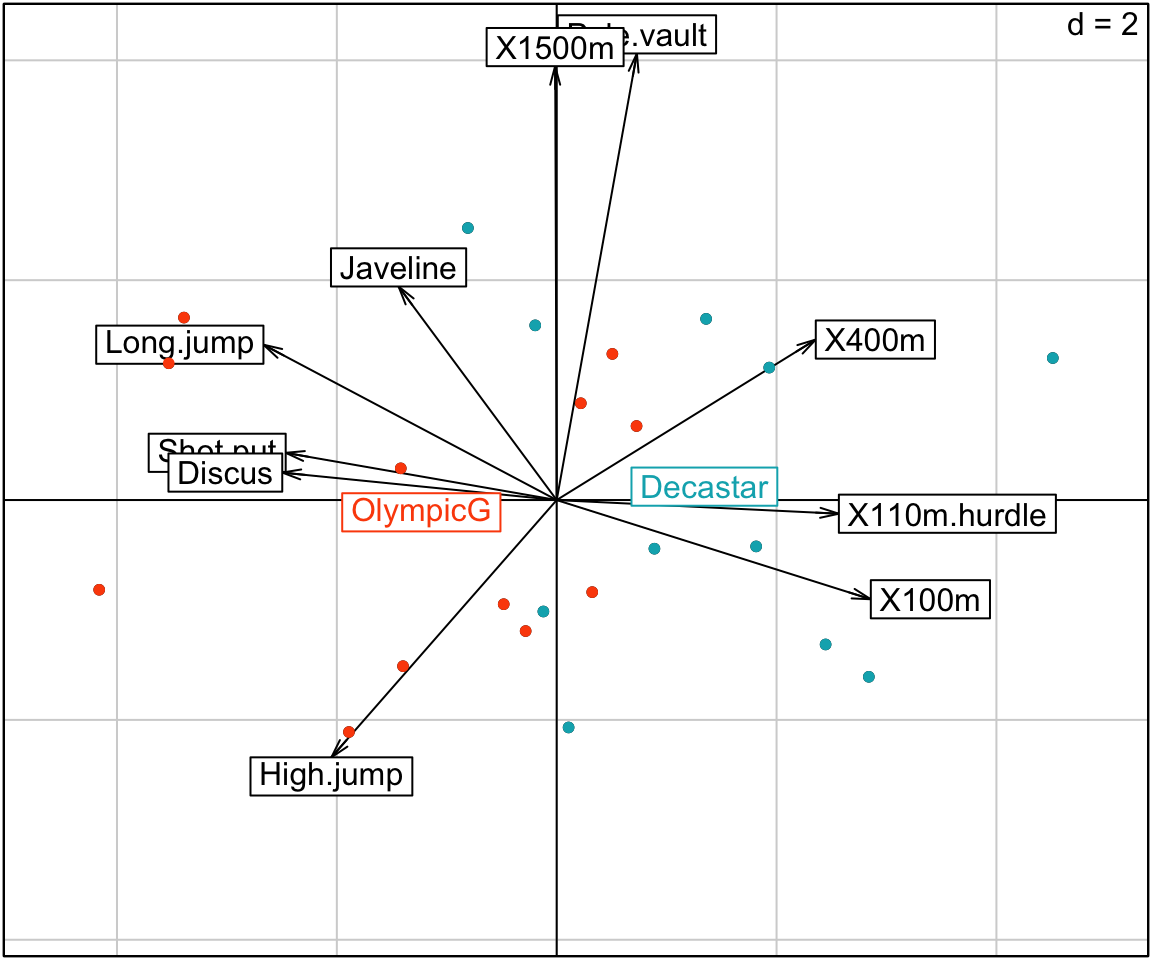

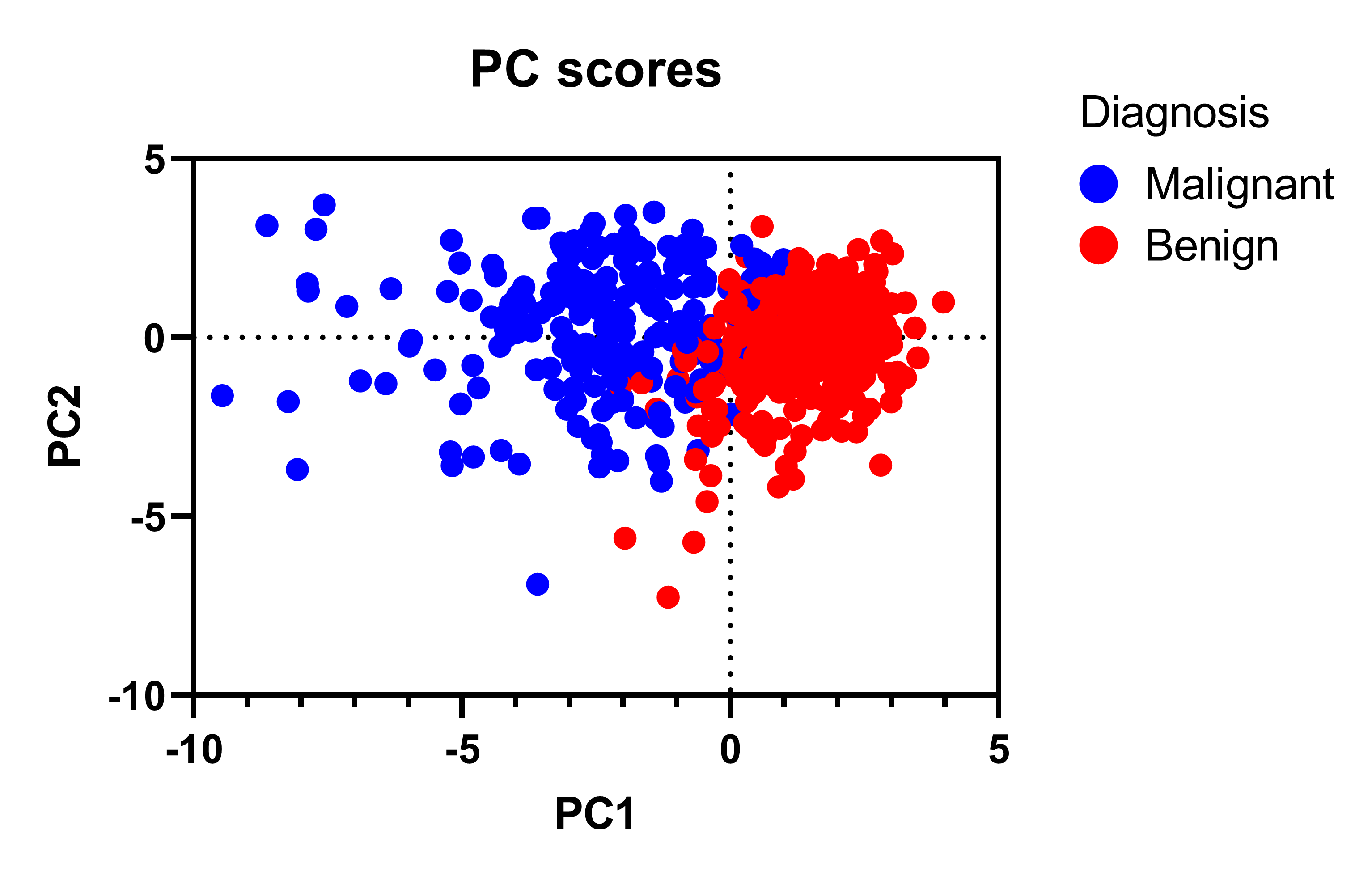

Since you have a binary outcome (on/off, 1/0, dead/alive), it sounds more like a logistic regression problem, much like the Titanic survivors example in JMP's sample data. You might be better off with a decision tree or other tree-based methods like XGBoost, or a neural net. You could even try support vector machines. Many of those options depend on which version of JMP you have.

PCA is really designed to find a set of linearly independent vectors (from the predictor data set; you would not feed it the response data) that maximize the variability explained in the data along a set of orthogonal principal components. You can read up on it in JMP's help. The purpose of PCA isn't necessarily the prediction of an outcome, i.e., the principal components won't necessarily be good predictors for separating the positives from the negatives.
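To illustrate why the leading principal component may not separate the classes, here is a small Python sketch on made-up two-column data: `x1` has a large variance but no relation to the outcome, while `x2` has a small variance and shifts with the outcome. Everything here (the data, the 2x2 closed-form PCA, the `separation` helper) is an assumption chosen to keep the example self-contained, not something taken from your data.

```python
import math
import random

rng = random.Random(0)
labels = [i % 2 for i in range(400)]                  # binary outcome, balanced
x1 = [rng.gauss(0, 10) for _ in labels]               # high variance, no class signal
x2 = [rng.gauss(2 * label, 0.5) for label in labels]  # low variance, class signal


def mean(values):
    return sum(values) / len(values)


def covariance(a, b):
    mean_a, mean_b = mean(a), mean(b)
    return sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b)) / (len(a) - 1)


# PCA on the predictors only: the outcome is never used here.
sxx, syy, sxy = covariance(x1, x1), covariance(x2, x2), covariance(x1, x2)
# Direction of maximum variance for a symmetric 2x2 covariance matrix.
theta = 0.5 * math.atan2(2 * sxy, sxx - syy)
pc1 = (math.cos(theta), math.sin(theta))
pc2 = (-math.sin(theta), math.cos(theta))

mean_x1, mean_x2 = mean(x1), mean(x2)


def project(direction):
    """Scores of the centered data along a unit direction."""
    return [
        (a - mean_x1) * direction[0] + (b - mean_x2) * direction[1]
        for a, b in zip(x1, x2)
    ]


def separation(scores):
    """Gap between class means, in units of the pooled within-class std dev."""
    group0 = [s for s, label in zip(scores, labels) if label == 0]
    group1 = [s for s, label in zip(scores, labels) if label == 1]
    pooled_var = (covariance(group0, group0) + covariance(group1, group1)) / 2
    return abs(mean(group1) - mean(group0)) / math.sqrt(pooled_var)


sep_pc1 = separation(project(pc1))
sep_pc2 = separation(project(pc2))
print(f"PC1 direction: ({pc1[0]:.3f}, {pc1[1]:.3f}), class separation: {sep_pc1:.2f}")
print(f"PC2 direction: ({pc2[0]:.3f}, {pc2[1]:.3f}), class separation: {sep_pc2:.2f}")
```

PC1 lines up with `x1` because that is where the variance is, yet nearly all of the class separation sits on PC2. If you kept only the top component as a predictor, you would throw away the column that matters.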
